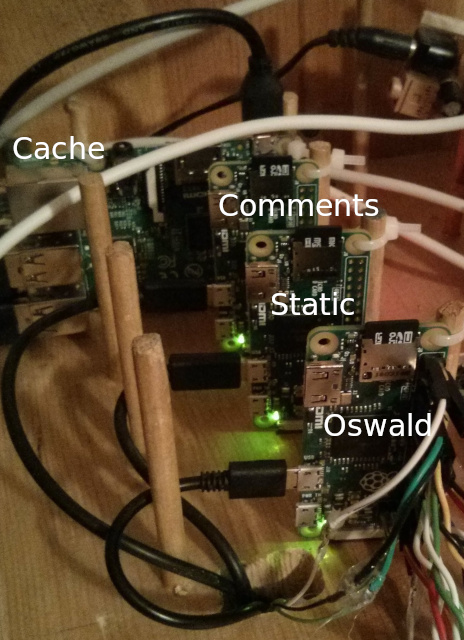

↑ OswaldCluster

Page Created: 7/30/2014 Last Modified: 11/27/2025 Last Generated: 5/4/2026

The computer cluster that is serving this page consists of one Raspberry Pi B+, two Raspberry Pi Zeros, one Raspberry Pi Zero W, and one Raspberry Pi Pico 2 W that inter-operate:

- Cache - An IPtables firewall and NAT router which also performs Memcached and dnsmasq caching

- Static - A static server running ScratchedInSpace that is also a wireless NAT router and access point

- Pico - A Spartan Smolnet server that connects to Static over wifi

- Comments - A dynamic comment server running ScratchedInTime

- Oswald - An XMPP control server running OswaldBot that passes audio through DeadPiAudio.

- Overview

- How It All Began

- Adding Minimal Dynamics

- The Difficulties of Spam

- Hardware and Software Configurations

- The Pi Model B+ to Pi Zero USB Ethernet Gadget Router

- Single 2A USB Power Supply

- Overclocking the Pi Model B+ to 1 GHz

- Disabling the Pi Model B+ USB Current Limiter

- Staggering the Boot and Shutdown Sequence of USB Gadgets and Host

- Disable HDMI

- Connect to USB Serial Gadget Console

- Udev rules

- Cache

- Static

- Pico

- Comments

- Oswald

Overview

The software running this cluster is a Perl-, Python-, and Bash-based static wiki generator with a document-oriented, NoSQL-style architecture, including tags, backlinks, metadata, search, recursive macros, transclusion, breadcrumbs, a blog, and wiki-like editing, combined with a dynamic commenting system with cryptographic ID, remote monitoring and control, Atom feeds, and captcha.

It integrates with FastCGI, reverse proxy, caching, Textile markup, Memcached, Bogofilter Bayesian spam filtering, and XMPP.

This is the third largest project I have ever undertaken, the largest being my LandmarkFilm and its production system, and the second largest being TrillSat.

ScratchedInTime is the 3rd and largest Perl script I have ever written (ScratchedInSpace being the 2nd, and IdThreePlugin being the first), and I never wanted it to be that large. Where ScratchedInSpace is tiny and focused, ScratchedInTime is large and does a lot of different things. I never wanted to write a CGI (or FastCGI) script, but it was the only way to allow user commenting on my static site without resorting to a huge dynamic system or a 3rd party.

How It All Began

Back in 2013, OswaldBot worked so well that I thought, "Hey, maybe I can run Foswiki on these things and run my BashTalkRadio on them". But even with Foswiki page caching and Memcached enabled and replacing Apache with Nginx, it was still too slow. The Perl language that Foswiki used was fast, but its dynamic nature was still too much for the 700 MHz ARM.

That really bothered me and meant I would have to keep my wiki on a larger, more complex and power hungry server.

So, I thought, "I've always wanted to create my own wiki engine, maybe I can create a NoSQL, document-oriented-style, structured wiki in ultra-fast C language!" I started reacquainting myself with C and then realized that string processing (used heavily in such wikis) is nightmarish in C.

...but then I realized that Perl is ultra-fast at string processing and can sometimes be faster than C (unless you're a C guru) due to design efficiencies... hmm, perhaps that is why the Foswiki guys used it? I did some research on Perl and really liked what I read, but then realized I would hit the same performance problem as Foswiki, generating those pages dynamically.

Then, I thought--what if I generate the dynamic pages on the (more powerful) client, and just put the static, pre-generated versions on the Raspberry Pi? Wow! I thought that someone else must have surely thought of this idea, and sure enough, a lot of Python, Ruby, and Perl programmers had created their own "static site generators" to do that very thing.... but... did they incorporate macros, recursive search and NoSQL database features?

Most of them did not. It appears the static site crowd handles this using various templating systems and other methods.

So as I delved into this idea further, I realized that creating a static site generator was actually much easier than creating a dynamic, CGI-based wiki. You pretty much design a parser to interpret any markup of your choice (just think up your own) and turn it into HTML. This was my motivating factor for putting Perl to the test.

I named it ScratchedInSpace, and it worked better than I imagined. Perl will easily call itself recursively, so I was able to integrate recursive rendering and macros. And my plugins are simply Perl subroutines substituted in place of my macro markup. This is an immensely powerful wiki structure, essentially a document-oriented database. It is, however, very fragile and not good coding practice. But why do I care? It's just a static site generator running with user permissions on a Linux client PC. It's not server-side code. So I found that static site generators are really neat things...
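To make the recursive rendering idea concrete, here is a minimal sketch, written in Python rather than Perl. The `{{name arg}}` macro syntax, the `PLUGINS` table, and the page names are all invented for illustration and are not ScratchedInSpace's actual markup or code.

```python
import re

# Hypothetical page store: name -> raw markup (not the real site content).
PAGES = {
    "Home": "Welcome. {{include Footer}}",
    "Footer": "Served by {{upper static}}.",
}

# Hypothetical macro syntax: {{name argument}}
MACRO = re.compile(r"\{\{(\w+)\s+([^{}]*)\}\}")


def render(text, depth=0, max_depth=10):
    """Expand macros, re-rendering each plugin's output recursively."""
    if depth > max_depth:
        raise RecursionError("macro nesting too deep")

    def expand(match):
        name, arg = match.group(1), match.group(2).strip()
        plugin = PLUGINS.get(name)
        if plugin is None:
            return match.group(0)  # leave unknown macros untouched
        # The plugin's output may itself contain macros, so render it again.
        return render(plugin(arg), depth + 1, max_depth)

    return MACRO.sub(expand, text)


# Plugins are plain functions substituted in place of the macro markup.
PLUGINS = {
    "include": lambda page: PAGES.get(page, ""),  # transclusion
    "upper": lambda s: s.upper(),
}
```

Calling `render(PAGES["Home"])` pulls in the Footer page, which in turn triggers the `upper` plugin. The depth limit is one simple guard against a page that transcludes itself.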

Adding Minimal Dynamics

When I set out to design it, I decided I would not have a commenting system. From what I learned from the OscarPartySystem, I knew that it was too much of a security risk, too hard to maintain, too hard to code, and would simply bog down the little Pi. But many people believe that a comments forum is important to a site, that it provides valuable input from others.

Most people with static sites handle this via a 3rd party service such as Disqus. But this seemed like it was violating the principle of building such a system in the first place, and I didn't want to send my guests to a 3rd party.

So I decided for isolation and speed to build my first dynamic, CGI program on a separate Raspberry Pi, and use a 3rd Pi to run a Memcached server, which I would use as a form of high-level IPC. Memcached turned out to be far more useful than this as the project progressed, allowing Nginx caching, ring buffers, CGI session tokens and tarpit timing.

The Difficulties of Spam

Writing a CGI comment page is easy. Keeping people from hacking it into oblivion, being enslaved by their bot army, or being tricked by them masquerading as people you know is hard.

Most of my time working on this project was spent on this problem, which I broke down into the following:

- Create a captcha system to weed out the bots

- Validate and restrict the input

- Create a tarpit minefield

- Use Eric Raymond's Bogofilter for Bayesian content filtering

- Create a way for me to monitor and flag spam remotely

- Set size limits on comments pages

- Provide a cryptographic ID to people that want them

I had to create a system that would keep the resource usage of the Raspberry Pi low and keep it easy for me to manage. And because I did not incorporate a user account system, if the system did get compromised, nothing of value would be lost (no private data would be exposed).

I eventually got it working, and named it ScratchedInTime.

So, by the time I was done, I had 3 servers, Static, Comments, and Cache, and then there was OswaldBot, dutifully running the whole time, which I had been ignoring while my mind was deep in Perl land.

What if... OswaldBot could send messages to the comments server? Then I could use my phone to text commands to that server via proxy.

So I added this ability to OswaldBot.

Hardware and Software Configurations

Since June 2022, all servers have been running Void Linux with musl and runit. This replaced Arch Linux ARM (with glibc and, later, systemd), which I had been using since 2014 (on Oswald since 2013), after Arch Linux ARM dropped ARMv6 support in February 2022↗. All servers are running headless, with no GUI. My code is not portable and there are some peculiarities that only work on my OS configuration.

During the 5-year period between 2014-2019, I ran all servers on 4 Raspberry Pi B computers, replacing two of them with B+ after I accidentally damaged them. I routed the entire site through an old consumer wifi router, but I disabled the wifi radio and used the built-in Ethernet ports. This router ran a 240 MHz Broadcom BCM5354 rev 3 CPU, and it ran the Linux 2.4 kernel. Occasionally, I would also have to add a small switch to increase my ports when needed such as when port 3 on the router burned out in 2019. Each Pi ran at 700 MHz and had its own USB power supply, and occasionally one of the power modules or MicroSD cards would fail and I would replace it. At that time, Cache was only acting as a Memcached server and did little else. I simply needed 512 MB of memory for caching the other servers.

You can see the original configuration here:

The Pi Model B+ to Pi Zero USB Ethernet Gadget Router

But in 2019, after a hardware failure burned out my only Pi 3 that I used to generate the pages for this cluster, I started looking at how to make better use of the hardware that I had on-hand, and I had 3 Pi Zeros that were not used. While they were faster and inexpensive, I had previously ignored these computers for networking since they lacked Ethernet connections. Even the Pi Zero W wasn't sufficient, as I didn't want to use an unreliable and potentially slower connection.

I was very familiar with the Pi Zero and Pi Zero W, having used both of them in my TrillSat project, but I couldn't find a reason to use them in this Internet cluster until I started reading more about "USB Ethernet Gadget" mode. Then it hit me--I realized that I could run Ethernet over the USB cables themselves. Normally this is not a good thing, as they would all be competing for USB bandwidth, but it is actually not much different than the Ethernet on the Pi B+, for example, that internally runs its Ethernet adapter over USB. And then I noticed something fascinating--the Pi B+ uses less power than the original Pi along with more USB ports (4), and those Pi Zeros use such low power that they can be powered over USB... all of them. There is a configuration option that allows you to remove the current limiter on the Pi B+ USB ports, and as long as your power supply is sufficient, you can power all Pi Zeros on those ports.

This is significant, because it allows you to do something that was not possible on Pis with only a single Ethernet adapter--turn it into a router! It was at this point that I decided to have Cache also perform all routing functions for the cluster. I had built my own routers in the late 1990s and early 2000s using old 10 Mb NICs and things like IPchains, LRP, LEAF, or later BSD-based m0n0wall, attaching an old 10 Mb hub (since my Internet DSL speeds topped out at 1 Mb anyway, the upload speeds being even smaller), but once the inexpensive consumer wifi routers started appearing in the 2000s there was no need to build them anymore.

So the current page you are reading right now on greatfractal.com is being served from the $5 Static Pi Zero that you see in the image above, and the traffic is being passed through Cache directly to the Internet to reach you. And the overclocked Broadcom CPU in the Pi B+ that is routing the traffic is much faster than the Broadcom CPU in my old router, along with much more memory. And the entire assembly uses much less power and much less equipment, all from a single 2 Amp USB power supply. I eliminated the external router, switch, and USB audio device, along with multiple power supplies, power strip, and Ethernet and USB cables.

Note that the PC board with the transistors and relays shown in the photos is a control board that I built years ago for various projects. The board behind that, with the volume and tone knobs, is an audio amplifier (which contains a 22 Watt TDA7360 stereo audio amplifier chip suitable for car stereos) that I removed from an old speaker system. The black box on the lower left is OswaldLaser, and the coiled wire on the right in the image at the top of this page is a DS18B20+ temperature probe. I had this part left over from my TrillSat project and decided to add it to keep an eye on my home temperature.

In November 2025, I replaced the Pi Zero for Static with a Pi Zero W, which serves the exact same function for the cluster (I just swapped out the board) but includes a Wifi radio that I needed. I don't use this cluster over Wifi for this site (it's wired only). However, I needed to add a Pi Pico 2 W to my router to allow it to serve Spartan pages on a sister site as part of my Louia ObScura project, I had no free USB ports left, and the Pico 2 W was designed for Wifi networking, so I figured the best option was to have the Static server act as a Wifi Access Point for that Pico 2 W. This required that I create a second router (since the Pi Zero W lacks 4addr bridge capability) and then add hostapd and dnsmasq.

This current configuration with the two 1 GHz Pi Zeros, one 1 GHz Pi Zero W, and the Pi B+ acting as a router is much faster and more responsive than the previous four Pi B (or B+) boards with external 240 MHz router. The total power usage may be similar to a quad-core Pi 2 connected to my old router, but it is better in several ways, as virtualizing the servers would use up precious resources, containerization would add complexity, and four Pis at 512 MB each = 2 GB RAM, twice as much as the 1 GB Pi 2. Also the storage speeds and capacity in the Pi Zero cluster are quadrupled, since the 4 flash drives are running in parallel. The sharing of USB for Ethernet is not a disadvantage here, either, for most of the traffic had to be filtered through Static anyway, and Cache and Comments were using Static as the frontend. Also the elimination of the USB audio on Oswald by DeadPiAudio improves its USB bandwidth as well.

Previously, on my old router, all servers shared the same broadcast domain and the same network segment (but the internal switch prevented them from sharing the same collision domain). If you've ever worked in corporate IT, you've likely heard of VLANs. I first worked with the concept in 2002, but even consumer routers running things like dd-wrt support them now, which allow you to create multiple layer 2 broadcast domains. But since I had only 4 servers, and later added a 5th tiny Pico, I wasn't too worried about having too much internal network traffic.

But since I've connected the three Pi Zeros in USB Ethernet Gadget mode and assigned different subnetworks to them, each micro USB "NIC" and network segment acts as its own LAN with its own broadcast domain. In my case, a 700 MHz Pi Model B+, which I later overclocked to 1 GHz to match the Pi Zeros, is acting as a layer 3 router and not a layer 2 switch (which could have been configured using Linux Ethernet bridging mode) which is essentially what was occurring before with the old router. So, while the CPU is faster, the segmentation also reduces internal traffic and takes a load off of those Pi Zero CPUs.
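The effect of giving each gadget link its own subnet can be sketched with Python's standard `ipaddress` module. The addresses and the `/30` prefix length below are assumptions made up for the example, not the cluster's real addressing plan.

```python
import ipaddress

# Hypothetical addressing plan: one small subnet per USB gadget link.
LINKS = {
    "static": ipaddress.ip_network("10.0.1.0/30"),
    "comments": ipaddress.ip_network("10.0.2.0/30"),
    "oswald": ipaddress.ip_network("10.0.3.0/30"),
}


def same_segment(a, b):
    """True if both addresses fall inside the same per-link subnet."""
    a, b = ipaddress.ip_address(a), ipaddress.ip_address(b)
    return any(a in net and b in net for net in LINKS.values())


def broadcast_addresses():
    """Each link has its own broadcast address, so broadcasts stay local."""
    return {name: str(net.broadcast_address) for name, net in LINKS.items()}
```

Two hosts on different links never share a segment here, so traffic between them has to be routed at layer 3 rather than switched at layer 2.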

One of the limitations of USB gadget mode on the Pi Zero computers is that they have no USB hub (and adding one will block gadget mode), and so you cannot add additional USB devices to them in this mode. So any USB devices have to be plugged into Cache.

I left Cache in place, still running on a Pi B+, but it was a nice feeling swapping out the other three Pis for Pi Zeros. Two of them were original Pi B boards that had been running for 5 years straight, and it was nice knowing that I'm using much less power now. And if I get nostalgic, one of those original Pi B boards that I damaged years ago is still there, lying flat, with only its audio filter and jack in use in DeadPiAudio.

Single 2A USB Power Supply

Previously, I liked the redundancy of multiple power supplies, but what I found was that it was just more points of failure and more cost, since the cluster was not very functional if a single server was down anyway, and I usually restored it quickly enough. Now if that power supply fails, I have a set of them that I can keep on hand to use immediately.

Overclocking the Pi Model B+ to 1 GHz

After my Pi 3 desktop computer burned out as mentioned earlier, I moved my Pi 2 from my PacketRadio project to replace it and then moved one of the Pi Model B+ boards to replace the Pi 2, but I needed more speed for the Dire Wolf software modem and had to overclock the Pi Model B+ to 1 GHz in TRILLSAT-G (and set turbo mode) for better reliability. This seems to work without issue, so I decided to overclock Cache on this project as well (without setting turbo mode) to improve performance even further and added the following to my /boot/config.txt file to match the default values of the Pi Zero as listed on their official overclocking options↗ page:

over_voltage=6

arm_freq=1000

gpu_freq=400

core_freq=400

sdram_freq=450

Since the Pi Model B+ and Pi Zero were released about a year apart and use the same model CPU, the CPU reliability of that overclocked 1 GHz Pi Model B+ running on Cache is probably similar to the reliability of the 1 GHz Pi Zeros running in Static, Comments, and Oswald.

Disabling the Pi Model B+ USB Current Limiter

In order to ensure that I didn't run into problems with the Pi Zeros drawing too much total current from the Pi B+ USB ports, I had to disable the default current limiter by adding max_usb_current=1 to /boot/config.txt.

Staggering the Boot and Shutdown Sequence of USB Gadgets and Host

I set up the g_cdc gadgets (combined Ethernet + Serial) on the Pi Zeros. One of the downsides of powering the three Pi Zero boards from the USB ports on Cache is that they all max out their current draw at the same time, causing voltage dropouts on my single 2A supply. This makes the red LED on the Pi B+ flicker off and potentially makes the cluster unstable.

Another downside is that the Linux udev system on Cache assigns usb0, usb1, and usb2 to the virtual Ethernet adapters in the order in which it first sees them, mixing up the order if there is a slight difference in boot timing.

But one of the upsides is that you can stagger them by adding boot_delay= statements in /boot/config.txt for the number of seconds you need to delay. The Pi B+ won't actually power up the USB ports immediately but they will turn on several seconds into the boot and then the delays will start up. I also noticed that the Pi Model B+ can programmatically control power to the USB hub, which is a nice feature.
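As a rough illustration of the staggering, the helper below generates one `boot_delay=` line per Pi Zero. The 5-second step, the zero starting delay, and the host order are assumptions picked for the example; the real values depend on how long each board's current peak lasts.

```python
def stagger_delays(hosts, step_seconds=5, first_delay=0):
    """Return {host: config.txt line}, spacing each boot by step_seconds."""
    return {
        host: f"boot_delay={first_delay + i * step_seconds}"
        for i, host in enumerate(hosts)
    }


if __name__ == "__main__":
    # Each line would go into that board's own /boot/config.txt.
    for host, line in stagger_delays(["static", "comments", "oswald"]).items():
        print(f"{host}: {line}")
```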

And I also had to handle the reverse--create a safe way of shutting down the servers. Previously, with all 4 servers behind an external router, it didn't matter how I shut them down, but now I have to make sure to shut down the Pi Model B+ acting as router last, for if I shut it down too early, it will cut power to those USB ports, abruptly pulling the power plug on those three Pi Zeros and leaving their file systems in inconsistent states. I'm so used to typing "shutdown now" that I had forgotten that this command can also do neat things like "shutdown +1 minute", which exits back to the shell immediately yet queues up that command for 1 minute, without the need to use a sleep command or an external timer like atd. This causes Cache, the host server, to wait until the Pi Zeros have all shut down first.
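The ordering can be sketched as a short command plan: ask each Pi Zero to shut down over ssh, then queue the host's own delayed shutdown. The ssh host names are hypothetical, and the exact `shutdown` arguments (and whether a halt or poweroff flag is needed) vary by distribution, so treat the command lists as placeholders.

```python
import subprocess

GADGETS = ["static", "comments", "oswald"]  # hypothetical ssh host names


def shutdown_plan(gadgets=GADGETS, host_delay_minutes=1):
    """Build the command list: gadgets first, the USB host (router) last."""
    plan = [["ssh", g, "shutdown", "now"] for g in gadgets]
    # The delayed form returns to the shell at once and fires later,
    # giving the gadgets time to finish before USB power is cut.
    plan.append(["shutdown", f"+{host_delay_minutes}"])
    return plan


def run_plan(plan, runner=subprocess.run):
    """Execute each command; a dropped ssh session is not treated as fatal."""
    for cmd in plan:
        runner(cmd, check=False)
```

Passing a different `runner` makes it possible to print or log the plan as a dry run before trusting it with real hardware.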

There is also the possibility of the Pi Model B+ locking up and preventing me from accessing those Pi Zeros via ssh to initiate a graceful shutdown. Unlike with a normal Ethernet router, I can no longer just reboot the router to re-establish an Ethernet connection, as rebooting the Pi Model B+ seems to shut off USB power. And since it would be cumbersome to connect keyboards or serial consoles to each of them, what I need is something like a low-level magic sysrq-like mechanism to tell the kernel to shut down using a GPIO pin instead of a keyboard key. Thankfully, there is now a dtoverlay=gpio-shutdown line, along with some parameters, that can be enabled in /boot/config.txt to generate KEY_POWER events, and in the future, I plan to add a small ground switch to GPIO pins to act as a manual override, which gives me time to shut them down before I reboot the Pi Model B+ router.

Disable HDMI

Running /opt/vc/bin/tvservice -o disables HDMI, saving about 25 mA per board (100 mA for all 4) taking more stress off of that power supply. I added this to /etc/rc.local to disable it at boot.

Since no HDMI console exists on the main Cache server, however, if I cannot access it over the network, I have to use /dev/ttyAMA0, the UART serial connection and a set of 3 wires to access it over a minicom terminal.

Connect to USB Serial Gadget Console

Once they boot up, the serial devices begin to show up as /dev/ttyACM0, /dev/ttyACM1, /dev/ttyACM2, again in the order in which the host Pi B+, called Cache, first sees them. I found that the serial devices were necessary to perform all of the network configuration headless to avoid powering them down and swapping MicroSD cards back and forth just to edit configuration files.

Udev rules

I created Linux udev↗ rules to assign unique names to the network devices instead of relying on the staggered boot sequence (which is still not 100% reliable), but the MAC addresses on the USB Ethernet gadgets are randomized on each boot, so I had to use the modprobe options for g_cdc to set the host_addr (the ones that show up on Cache and must be unique) and dev_addr (the one that shows up on the Pi Zero and can be the same) MAC addresses. Udev allows you to rename devices and even run commands, but you have to be careful of the sequence. So I used udev on Cache to bring all 3 IP links up and even assign IP addresses to them, eliminating the need for any DHCP server.

On each Pi Zero, I use ip link/addr/route commands in the /etc/rc.local file to enable the virtual Ethernet device and set the static ip and default gateway on boot.

So what happens is, for example, after the stagger delay, Static boots up, assigns itself a static IP and presents a MAC address to Cache. Udev on Cache sees the device MAC, renames it to Static, and uses the ip link set and ip addr add commands to assign a static IP for the same subnet that matches the Pi Zero subnet.

A similar situation occurs when Oswald and Comments boot up.
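The two pieces of that handshake can be sketched as generated text: a modprobe options line for each Pi Zero and a matching udev rule for Cache. The MAC addresses, interface name, IP address, and the `/usr/bin/ip` path are placeholders, and the exact rule layout is an assumption rather than a copy of my actual rules.

```python
def modprobe_options(host_addr, dev_addr):
    """Line for a modprobe.d file on a Pi Zero, pinning both gadget MACs."""
    return f"options g_cdc host_addr={host_addr} dev_addr={dev_addr}"


def udev_rule(name, host_addr, cidr, ip_bin="/usr/bin/ip"):
    """Rule for Cache: match the fixed MAC, rename the link, bring it up."""
    return ", ".join([
        'SUBSYSTEM=="net"',
        'ACTION=="add"',
        f'ATTR{{address}}=="{host_addr.lower()}"',  # MAC as seen by the host
        f'NAME="{name}"',
        f'RUN+="{ip_bin} link set {name} up"',
        f'RUN+="{ip_bin} addr add {cidr} dev {name}"',
    ])


if __name__ == "__main__":
    # Placeholder locally administered MACs and a made-up subnet.
    print(modprobe_options("02:00:00:00:00:01", "02:00:00:00:00:02"))
    print(udev_rule("static", "02:00:00:00:00:01", "10.0.1.1/30"))
```

Because the rule matches on the pinned `host_addr`, the interface name no longer depends on which Pi Zero happens to enumerate first.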

Once all the gadgets are up and all the IP addresses are assigned to their individual subnets (three of them), then it is just a matter of setting up IP forwarding, masquerading, firewall rules, and dnsmasq DNS caching on Cache, like any typical Linux router/firewall/gateway.
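A bare-bones version of that router setup might look like the command list below. The WAN interface name `eth0` and the renamed LAN interfaces are assumptions, and the sketch leaves out everything site-specific, such as the port forwarding that lets outside requests reach Static and the rest of the firewall policy.

```python
def router_commands(wan="eth0", lans=("static", "comments", "oswald")):
    """Return the commands for forwarding plus NAT on a Linux router."""
    cmds = [
        # Let the kernel route packets between interfaces.
        ["sysctl", "-w", "net.ipv4.ip_forward=1"],
        # Rewrite outgoing source addresses to the router's WAN address.
        ["iptables", "-t", "nat", "-A", "POSTROUTING",
         "-o", wan, "-j", "MASQUERADE"],
    ]
    for lan in lans:
        # Allow new connections from each internal segment out to the WAN.
        cmds.append(["iptables", "-A", "FORWARD",
                     "-i", lan, "-o", wan, "-j", "ACCEPT"])
        # Allow only reply traffic back in.
        cmds.append(["iptables", "-A", "FORWARD", "-i", wan, "-o", lan,
                     "-m", "conntrack", "--ctstate", "RELATED,ESTABLISHED",
                     "-j", "ACCEPT"])
    return cmds


if __name__ == "__main__":
    for cmd in router_commands():
        print(" ".join(cmd))
```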

Cache

Cache acts as the primary Internet router using IPtables (IPv4 only), dnsmasq for DNS caching, and IP forwarding and masquerading to act as a NAT gateway. It also runs Memcached, which is given as much of the 512 MB of memory as possible. Memcached is used by Nginx to cache pages stored by ScratchedInTime, is used as a type of high-level IPC between ScratchedInSpace and ScratchedInTime, and between ScratchedInTime and OswaldBot, and is used by ScratchedInTime to store persistent variables for FastCGI access.

I considered turning Cache into an Ethernet bridge at first, as bridges tend to decrease CPU usage over layer 3 routing, and I wanted to minimize CPU usage. Ebtables is an interesting layer 2 firewall that could be added in this case. But then I realized that all devices would have to go into promiscuous mode and share a single IP, acting as a single computer in some ways. One of the bad things about doing this is that all incoming traffic would be received by each device, so while it eased the burden on the routing PC, Cache, it increased the burden on Static, Comments, and Oswald to filter out the traffic, and those servers needed more CPU cycles than Cache since they had computationally-heavier tasks. So I went with layer 3 NAT routing to keep the traffic quiet for those servers until something is actually directed to them.

After boot, Cache operates primarily in memory with very little need to access the flash storage.

Since Cache has one free USB port after Static, Comments, and Oswald are connected, this port can be used to connect external devices or even a hub. As long as these devices can be accessed over IP by the other servers and have moderate latency/bandwidth requirements, it's as if they are connected directly to the other server. A lot of Linux software that connects to USB devices contains tiny IP servers to allow network access.

Static

Static is a purely static web server running Nginx for speed and low resource usage. There is no CGI or FastCGI running on it. It also performs 3 additional functions. For static ScratchedInSpace pages (the main site), it uses the Nginx front side caching which I directed to tmpfs ramdisk, minimizing the need to pull from disk. Disk is slow on the Raspberry Pi sdcard, but 512 MB memory is high enough for the size of my static pages.

For dynamic pages (the ScratchedInTime comments and blog), it pulls from the Cache server running Memcached. And if Cache goes down, it pulls from Comments directly. This "proxies" my Comments server and keeps it from being exposed to the Internet directly for security reasons and for speed. I had to reduce the CPU processing of the dynamic server except in cases where I needed it to actually process, such as generating a new comment or blog.
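The lookup order for dynamic pages can be summarized in a few lines of Python. This is only a model of the behavior, not the Nginx configuration itself; `cache_get` and `origin_get` are hypothetical stand-ins for the Memcached lookup and the proxied request to Comments, and treating a cache miss the same as a cache outage is an assumption.

```python
def fetch_dynamic(key, cache_get, origin_get):
    """Try the cache first; on a miss or a cache outage, ask the origin."""
    try:
        page = cache_get(key)
    except ConnectionError:
        page = None  # cache server unreachable: treat it like a miss
    if page is not None:
        return page, "cache"
    return origin_get(key), "origin"
```

The second element of the returned tuple records which server answered, which is handy when checking that the fallback path actually works.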

As mentioned above, it now also partially acts as a Wifi router for Pico.

Pico

Pico is the Pi Pico 2 W server (an OS and Spartan Smolnet server I created from scratch in MicroLua on an RP2350 MCU) that connects to Static over a wireless 802.11n 2.4 GHz link to serve Spartan pages to a sister site at spartan://greatfractal.com. It's the only server in the cluster that I can move around anywhere within range, as it is a tiny wireless board that only needs a small amount of power over microUSB. It's eerie seeing it dangling on the end of its power cable or plugged into a rechargeable USB power bank knowing that it's even smaller than those Pi Zeros and yet still serving pages to the Internet (and it multitasks, so it can serve pages concurrently). One day I may try running the thing on solar power.

Comments

Comments is a web server running Lighttpd. I chose Lighttpd since it has built-in support to autospawn FastCGI, which Nginx didn't have. I run FastCGI on it to run ScratchedInTime, since it is more efficient than CGI and is less of a burden on the CPU.

Oswald

This is the speaking XMPP control server called OswaldBot that uses DeadPiAudio described below. See the OswaldBot page for more information.

Dead Pi Audio

I had previously used a USB CMedia-based audio device for OswaldBot instead of the internal Pi Audio due to the lack of ability to mute the left/right channels independently (causing a loud click due to voltage differences), but after I realized that the Pi Zero had no audio output (and that I had to build my own circuit), I figured out how to achieve channel muting in my DeadPiAudio project and was able to eliminate the USB audio device completely by re-using an old, burned-out Raspberry Pi Model B for its audio filter and jack.
